A structural analysis of the one dimension that artificial intelligence cannot compress.

The Discovery Hidden Inside the Formula

When the Fabrication Threshold is expressed as a ratio — FR = SSV / HVB — the formula looks like a diagnostic tool. It measures a relationship between two forces: the velocity at which synthetic signals can be produced, and the capacity of human systems to verify them. When the ratio exceeds 1, the system fails.
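The ratio can be written out as a minimal sketch. The function names and the sample figures below are illustrative assumptions, not part of the original framework; the only thing taken from the text is FR = SSV / HVB and the failure condition FR > 1.

```python
def fabrication_ratio(ssv: float, hvb: float) -> float:
    """Return FR = SSV / HVB, with both rates in signals per unit time."""
    if hvb <= 0:
        raise ValueError("HVB must be positive")
    return ssv / hvb


def threshold_crossed(ssv: float, hvb: float) -> bool:
    """True when synthetic signals arrive faster than they can be verified."""
    return fabrication_ratio(ssv, hvb) > 1.0


# Hypothetical figures: 40 synthetic submissions per day against a review
# capacity of 50 per day, then 500 per day against the same capacity.
print(fabrication_ratio(40, 50))   # 0.8
print(threshold_crossed(500, 50))  # True
```

Both quantities need the same unit (signals per day, per week, and so on) for the ratio to be meaningful.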

But the formula contains something that was not immediately visible when it was first proposed. It contains a discovery about the nature of fabrication itself.

Fabrication is not merely fast. Fabrication is instantaneous.

A synthetic identity does not take hours to produce — it takes seconds. A fabricated credential does not require days — it requires a prompt. An AI-generated research paper complete with methodology, citations, and data does not demand expertise — it demands computation. The cost of producing any isolated signal, in any domain, has approached zero.

But there is one dimension that fabrication cannot touch. One variable that remains structurally immune to synthetic production regardless of how powerful the generating system becomes.

That dimension is time.

Not time as a resource. Not time as a deadline. Time as an ontological property of meaning itself. Duration, continuity, accumulation, consequence — the properties that emerge only from processes that actually occurred, in sequence, over irreducible spans of human life.

This is the discovery that transforms the Fabrication Threshold from a diagnostic into a theorem. And it is expressed in three Latin words that extend the philosophical lineage from which the entire framework begins:

Persisto Ergo Didici. I persist, therefore I have learned.

From Cogito to Persisto: The Philosophical Arc

In 1637, René Descartes wrote “je pense, donc je suis” in the Discourse on the Method, the phrase best known in its later Latin form, Cogito ergo sum: I think, therefore I am. The phrase was the foundation of a new epistemology: the isolated thinking subject as the irreducible unit of knowledge. Doubt everything, Descartes argued, and what remains is the singular act of thought.

The consequences were not philosophical. They were architectural. Every institution built in the four centuries that followed inherited Descartes’ fundamental assumption: that the individual, as an isolated point of cognition, was the proper unit of trust, identity, and value. A credential verifies an isolated attribute. A degree certifies an isolated achievement. A reference confirms an isolated claim. A digital profile aggregates isolated data points.

This is what the isolation economy is. Not a metaphor. An architecture. The structural logic that made isolated signals the foundation of every verification system in the world.

The Fabrication Threshold is what happens when isolated signals become free to produce.

Cogito ergo sum created the vulnerability. Generative AI activated it.

But the philosophical arc does not end there. If Descartes defined the problem — the isolated thinking subject as the unit of knowledge — then the solution requires a different subject entirely.

Not the thinking subject. The persisting subject.

Persisto ergo didici does not say: I think, therefore I am. It says: I persist, therefore I have learned. The claim is not about a moment of cognition. It is about a process of accumulation. It is not about what can be isolated. It is about what can only be produced over time.

The philosophical shift is not semantic. It is structural. Cogito produces a point. Persisto produces a process. And processes are the one thing fabrication cannot produce — because fabrication has no duration.

The Asymmetry That the Formula Does Not Show

The Fabrication Threshold formula FR = SSV / HVB describes an asymmetry between fabrication velocity and verification capacity. But this asymmetry is more radical than it first appears.

SSV — Synthetic Signal Velocity — is not merely high. It is structurally unconstrained. There is no upper limit on how fast synthetic signals can be produced, because the cost of each additional signal approaches zero. A system that can produce one synthetic identity can produce a million. A model that can generate one fabricated research paper can generate ten thousand. The curve is not linear. It is exponential, approaching vertical.

HVB — Human Verification Bandwidth — is not merely low. It is structurally fixed. Human cognition has biological speed limits that no institutional reform can overcome. A peer reviewer can assess a limited number of papers per day, regardless of how many are submitted. A hiring manager can screen a limited number of candidates, regardless of how many apply. Verification cannot scale, because it is anchored to irreducible human time.

The result is a divergence that cannot be closed within any architecture that verifies isolated points. The two curves — fabrication and verification — crossed at a moment we did not observe, and they will not cross back.
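The divergence can be illustrated with a toy simulation. It assumes, purely for illustration, that SSV grows by a constant factor each period while HVB stays fixed; the function name, the growth factor, and the starting values are hypothetical and not measurements from the source.

```python
def first_crossing(ssv0: float, growth: float, hvb: float, max_periods: int = 1000):
    """Return the first period in which SSV / HVB exceeds 1, or None.

    ssv0   -- synthetic signals per period at period 0 (assumed)
    growth -- multiplicative growth of SSV per period (assumed)
    hvb    -- fixed human verification capacity per period
    """
    ssv = ssv0
    for period in range(max_periods + 1):
        if ssv / hvb > 1.0:
            return period
        ssv *= growth
    return None


# SSV doubles each period from 1 signal; reviewers handle 100 per period.
print(first_crossing(ssv0=1.0, growth=2.0, hvb=100.0))  # 7
```

Under these assumptions the ratio crosses 1 in period 7 and never returns, because nothing in the model raises HVB.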

But here is what the formula does not make visible: within this asymmetry, there exists a class of signals for which SSV is not merely low, but structurally driven toward zero.

Those signals are temporal processes.

A contribution sustained over a decade cannot be generated in seconds. A competence demonstrated across twenty years of changing contexts cannot be simulated backwards. A relationship confirmed by independent parties across different institutions over an extended period cannot be fabricated without fabricating the parties, their contexts, and their independent histories. That cost does not approach zero. It grows with the span of time and the number of independent parties that must be fabricated.

For temporal signals, the formula behaves differently. For sufficiently rich temporal processes, the cost of fabrication does not approach zero — it scales with time. To fabricate a ten-year contribution requires ten years of fabrication. To fabricate a twenty-year institutional history requires fabricating every independent party, every context, every observable consequence across two decades. The cost is not merely high. It is structurally proportional to the very thing being verified.

This means SSV for temporal processes approaches zero as depth increases — not by definition, but by the physics of time itself. Fabrication cannot compress duration. It can only traverse it. And traversing it costs exactly what it costs to have done the real thing.

The practical consequence is that FR_temporal inverts the trajectory of FR_isolated. Where isolated-signal systems face exponentially rising fabrication velocity, temporal systems face fabrication costs that rise with the depth of what is verified. The asymmetry does not disappear. It reverses direction.
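A rough way to see the reversal is to compare two cost functions. This is a linear toy model under stated assumptions: the unit costs, the `independent_parties` parameter, and both function names are hypothetical, chosen only to mirror the claim that the cost of fabricating a temporal process is proportional to what is being verified.

```python
def isolated_cost(unit_cost: float = 0.01) -> float:
    """Cost to fabricate one isolated signal: flat, whatever the signal claims."""
    return unit_cost


def temporal_cost(years: float, independent_parties: int,
                  cost_per_party_year: float = 1.0) -> float:
    """Cost to fabricate a temporal process under a linear toy model.

    Every independent party has to be sustained for every year claimed,
    so the cost grows with the depth of the record.
    """
    if years < 0 or independent_parties < 0:
        raise ValueError("years and independent_parties must be non-negative")
    return years * independent_parties * cost_per_party_year


for years in (1, 10, 20):
    print(years, isolated_cost(), temporal_cost(years, independent_parties=5))
# 1 0.01 5.0
# 10 0.01 50.0
# 20 0.01 100.0
```

The isolated cost stays flat while the temporal cost rises with every added year, which is the direction of the asymmetry described above.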

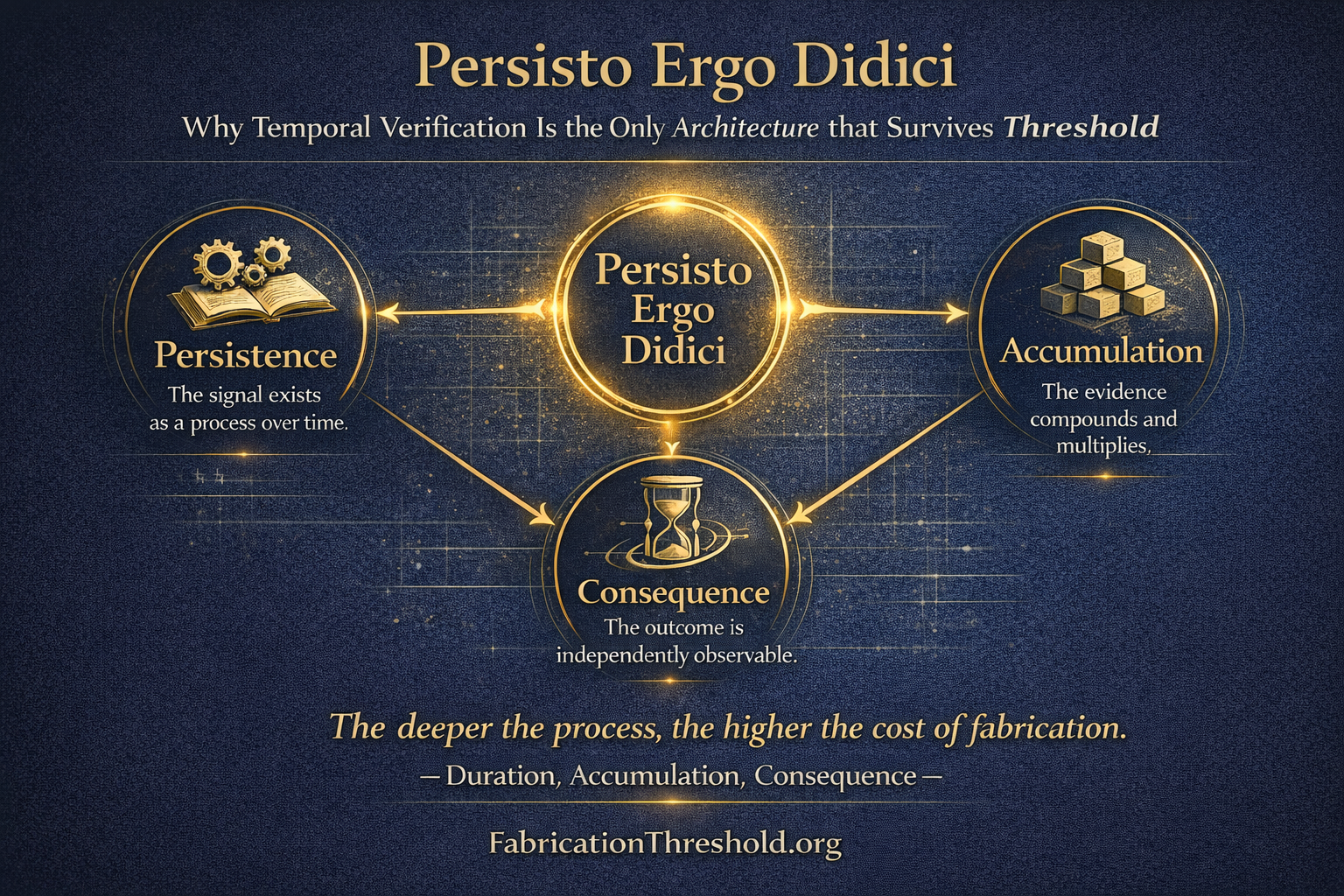

Persisto Ergo Didici: The Three Properties of Temporal Verification

The phrase Persisto ergo didici — I persist, therefore I have learned — contains within it a complete specification of what temporal verification requires. It names three properties that together make a signal irreducible to fabrication.

Persistence is the first property. The signal must exist over time — not as a record of a moment, but as evidence of a process. A credential records an event. Persistence records a trajectory. The difference is not one of quantity. It is one of kind. A forged credential can replicate any event. No system can replicate a trajectory without traversing it.

Accumulation is the second property. Temporal processes leave traces that compound. A researcher whose work has survived ten years of independent scrutiny has accumulated a form of validation that no single review can replicate — because each year of survival represents a new independent test, conducted by different parties, under different conditions, at different moments. The accumulation is the signal. It cannot be compressed into a single certification, because the compression destroys the evidence.

Consequence is the third property. Temporal processes produce effects that are independently observable. A leader who has navigated real crises over fifteen years has left outcomes that can be verified by people who were not part of the original event, in records that were created without anticipation of verification, across institutions that had no reason to coordinate their documentation. The consequence is distributed, time-stamped, and multi-sourced in a way that fabrication cannot replicate without fabricating an entire parallel history.

Together, these three properties define what temporal verification means in practice. It is not verification of isolated claims about the past. It is verification of processes that actually occurred — through evidence that could only exist if they did.
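The three properties can be sketched as a simple check over a list of dated records. Everything here is an assumption for illustration: the `Trace` type, the thresholds, and the idea of counting distinct dates and distinct sources as rough proxies for accumulation and consequence.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Trace:
    """One independently observable record left by a process."""
    recorded_on: date
    source: str  # who recorded it, for example an institution


def is_temporal_signal(traces, min_span_days=365, min_traces=3, min_sources=2):
    """Check persistence, accumulation, and consequence on a list of traces."""
    if not traces:
        return False
    dates = [t.recorded_on for t in traces]
    # Persistence: the record spans a real stretch of time.
    persistence = (max(dates) - min(dates)).days >= min_span_days
    # Accumulation: evidence was laid down at several distinct moments.
    accumulation = len(set(dates)) >= min_traces
    # Consequence: more than one independent source observed the effects.
    consequence = len({t.source for t in traces}) >= min_sources
    return persistence and accumulation and consequence


career = [
    Trace(date(2015, 3, 1), "University A"),
    Trace(date(2019, 6, 1), "Journal B"),
    Trace(date(2024, 1, 15), "Lab C"),
]
credential = [Trace(date(2024, 1, 15), "Issuer X")]

print(is_temporal_signal(career))      # True
print(is_temporal_signal(credential))  # False
```

A real system would also have to establish that the sources are genuinely independent, which this sketch takes on trust.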

The Four Domains Where Temporal Verification Changes the Architecture

The principle is structural. Its applications are concrete.

In academic publishing, the crisis is already visible. AI-generated papers are being submitted faster than peer review can assess them. The standard response — more reviewers, faster turnaround, AI-assisted detection — operates on the same logic as the system it is trying to protect. It adds more verification of isolated artifacts.

Temporal verification shifts the unit from the paper to the researcher. A body of work that has accumulated over twenty years, survived independent scrutiny across multiple institutions, and generated consequences observable in subsequent research cannot be fabricated retroactively. The verification is not of the paper. It is of the trajectory that produced it. SSV for trajectories approaches zero. The Fabrication Ratio inverts.

In recruitment, the problem is structurally identical. A synthetic resume is an isolated signal. A fabricated credential is an isolated signal. An AI-generated cover letter is an isolated signal. Every layer of traditional verification — reference checks, credential confirmation, skills assessment — is verification of isolated signals, and isolated signals can be fabricated at zero cost.

Temporal verification shifts the unit from the credential to the career. A decade of verifiable contribution — documented in public systems, confirmed by independent colleagues across different organizations, observable in outcomes that exist in the world — cannot be generated in a hiring cycle. The process required to produce it is the verification. Synthesis has no such process.

In democratic systems, the threat is not merely to individual elections. It is to the epistemic infrastructure that elections depend on. Synthetic polling data, manufactured public opinion, AI-generated journalism — these are isolated signals, produced at high velocity, inserted into systems designed to aggregate and respond to signals. The systems cannot distinguish synthetic from authentic, because the signals are structurally identical.

Temporal verification does not solve this through detection. It solves it by changing what counts as evidence of legitimate public discourse. A deliberative process that has developed over months, in documented exchanges, across verifiable participants with traceable histories of engagement — this is a temporal signal. Its authenticity is embedded in its duration. Fabrication can produce the same words. It cannot produce the same time.

In identity systems, the collapse is already underway. Biometric verification is being defeated by synthetic biometrics. Behavioral signals are being replicated by trained models. Every new verification layer creates a new fabrication surface, because every new layer is a new isolated signal to imitate.

Temporal identity changes the unit from attribute to continuity. An identity that is continuous — demonstrated across contexts, confirmed by independent parties over time, traceable through documented interactions with systems and people that existed before the identity was created — is not an isolated signal. It is a temporal process. And temporal processes cannot be fabricated backwards.

The Immunity Property: Why Temporal Verification Cannot Be Weaponized Against Itself

There is a concern that any framework describing systemic failure can be weaponized — used to delegitimize functioning institutions, to manufacture doubt, to destabilize trust without evidence. The Fabrication Threshold is not immune to this risk. Any concept that enters public discourse can be misused.

But temporal verification adds a property to the framework that is genuinely unusual. It is self-disciplining in a structural sense.

Any claim that an institution has crossed the Fabrication Threshold must itself be assessed as a signal. If the claim is an isolated assertion — produced rapidly, without temporal evidence, without verifiable process — then it is structurally identical to the synthetic signals the framework describes. It is itself a point claim. And temporal verification exposes point claims automatically.

To make a credible claim that an institution has crossed its threshold, you must demonstrate: the rate at which fabricated signals are entering the system, the verification capacity of the institution relative to that rate, and the trajectory of the ratio over time. This requires evidence that is temporal, accumulated, and independently observable.
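The three requirements can be expressed as a small check on a series of observations. This is a sketch under assumptions: the tuple layout, the minimum of three periods, and the rule that a supported claim needs a non-decreasing ratio ending above 1 are illustrative choices, not criteria stated in the source.

```python
def fr_trajectory(observations):
    """Turn (period, fabricated_rate, verification_capacity) into (period, FR)."""
    trajectory = []
    for period, ssv, hvb in sorted(observations):
        if hvb <= 0:
            raise ValueError("verification capacity must be positive")
        trajectory.append((period, ssv / hvb))
    return trajectory


def claim_is_supported(observations, min_periods=3):
    """A threshold claim needs a trajectory, not a single point."""
    trajectory = fr_trajectory(observations)
    if len(trajectory) < min_periods:
        return False  # an isolated assertion: no duration, no accumulation
    ratios = [fr for _, fr in trajectory]
    rising = all(later >= earlier for earlier, later in zip(ratios, ratios[1:]))
    return rising and ratios[-1] > 1.0


# Hypothetical yearly figures for one institution.
documented = [(2024, 50, 100), (2025, 90, 100), (2026, 150, 100)]
accusation = [(2026, 150, 100)]

print(claim_is_supported(documented))  # True
print(claim_is_supported(accusation))  # False
```

The single-point accusation fails even though its ratio exceeds 1, because it offers no trajectory to examine.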

A fabricated accusation has none of these properties. It is a single signal. Temporal verification exposes it as such — not through counter-accusation, not through political defense, but through the simple observation that the claim has no duration, no accumulation, no independent confirmation over time.

This is the Immunity Property: a framework grounded in temporal verification cannot be effectively weaponized through isolated claims, because isolated claims are exactly what temporal verification exposes.

The framework is not unfalsifiable. It is falsifiable through the same means it uses to evaluate everything else. Claims about it must survive temporal scrutiny — or they are precisely the kind of signal it was designed to identify.

Misunderstanding the Fabrication Threshold accelerates the Fabrication Threshold. That is not a paradox. It is the law applied to itself.

The Civilizational Dimension: Epistemic Resilience as Strategic Architecture

The Fabrication Threshold is not only an organizational challenge. It is a structural property of information systems at every scale — including the scale of nations.

Societies that have built their verification architectures on isolated signals — credentials, attributes, point-in-time assessments — face a rising Fabrication Ratio that they cannot reverse within their current architecture. The signals that their institutions rely on to establish truth, identity, competence, and authority are the same signals that can now be fabricated at zero cost. The architecture that worked for four centuries is approaching its structural limit.

Societies that build verification architectures on temporal processes — contribution documented over time, competence demonstrated across changing contexts, identity established through continuity rather than credential — retain a Fabrication Ratio that fabrication cannot raise. Their unit of verification is something synthesis cannot produce.

This is not a metaphor for resilience. It is the literal definition of epistemic resilience: the structural capacity of an information system to maintain reliable signal verification under conditions of high synthetic signal volume.

Epistemic resilience does not depend on military strength, economic scale, or technological advantage. It depends on the architecture of verification. A nation with sophisticated AI capabilities but isolated-signal verification systems is not more resilient — it is more exposed, because it can produce synthetic signals faster than it can verify them. A nation with modest AI capabilities but temporal verification systems is structurally protected, because the signals it relies on cannot be fabricated regardless of the sophistication of the attacker.

This reframes geopolitical advantage in the AI era. The relevant asymmetry is not between nations that have AI and nations that do not. It is between nations that have built temporal verification into their institutional architecture and nations that have not. The advantage accrues not to the fastest fabricator, but to the deepest verifier.

Persisto ergo didici — at the level of institutions, nations, and civilizations — means: what has persisted, verified, and accumulated over time is what can be trusted. And what can be trusted is the only foundation on which functioning systems can be built.

What Temporal Verification Is Not

Before the conclusion, a clarification that the framework requires.

Temporal verification is not seniority. Length of time in a position is an isolated signal. It is verifiable as a fact, but it is not a temporal process in the sense that matters here. A person can occupy a role for twenty years without producing verifiable contribution, without leaving consequences observable by independent parties, without demonstrating competence across changing contexts. Duration alone is not evidence. Duration plus accumulation plus consequence is evidence.

Nor is temporal verification about privileging the old over the young. The framework does not claim that longer careers are more credible than shorter ones. It claims that processes, whatever their length, produce different kinds of evidence than points. A three-year contribution that is publicly documented, independently confirmable, and traceable through observable outcomes is a temporal signal. A thirty-year career that exists only as a list of titles is a series of isolated claims.

The distinction is not between experienced and inexperienced. It is between processes that left verifiable traces and claims that did not.

Temporal verification is also not a barrier to entry. It is a shift in what counts as entry. In a point-based system, the barrier is producing the right isolated signals — the right credential, the right reference, the right attribute on the right checklist. In a temporal system, the barrier is having actually done the thing over sufficient time that the doing is independently observable. One of these barriers can be cleared by fabrication. The other cannot.

Finally, temporal verification is not nostalgia for pre-digital trust systems. It is not a claim that things were better before credentials, before platforms, before digital identity. Pre-digital systems had their own failure modes — and their verification capacity was often lower than today’s, not higher. The argument is not that we should return to informal reputation networks. It is that the unit of verification must shift from isolated signals to temporal processes, using the same technological infrastructure that currently enables fabrication to instead enable temporal documentation at scale.

The contribution graph, the persistent identity, the relational history — these are not rejections of digital infrastructure. They are a different use of it. One that makes the infrastructure serve temporal verification rather than point-based verification. One that makes SSV fall rather than rise as the infrastructure becomes more capable.

The Structural Conclusion

The Fabrication Threshold describes the point where isolated signal systems fail. Temporal verification describes the architecture where they do not.

The connection between them is not supplementary. It is necessary.

FR = SSV / HVB describes an asymmetry that cannot be closed by accelerating verification, expanding institutional capacity, or deploying detection systems — because all of these operate on the same logic as the system they are trying to protect. They verify isolated signals faster, and fabrication produces isolated signals faster still.

The only modification to the formula that changes its trajectory is a reduction in SSV. And SSV can only be reduced by changing what is verified — from signals that fabrication produces at zero cost to signals that fabrication cannot produce at any cost.

Those signals are temporal. They exist only because the processes that produced them actually occurred. They carry the irreducible mark of having taken time — duration, accumulation, consequence — that no generative system can synthesize, because synthesis has no duration.

Descartes gave us the architecture of isolation. The isolation economy built it into every institution, every platform, every verification system in the world. The Fabrication Threshold describes the mathematical limit of that architecture.

Persisto ergo didici gives us the architecture of what comes next. Not the isolated thinking subject, verified by its attributes. The persisting contributing subject, verified by its trajectory.

I persist. Therefore I have learned. Therefore — and only therefore — I can be trusted.

Rights and Usage

This article is published under Creative Commons Attribution–ShareAlike 4.0 International (CC BY-SA 4.0).

Anyone may use, cite, translate, adapt, and build upon this work freely, with attribution to FabricationThreshold.org and IsolationEconomy.org.

How to cite: FabricationThreshold.org (2026). Persisto Ergo Didici: Why Temporal Verification Is the Only Architecture That Survives the Fabrication Threshold. Retrieved from https://fabricationthreshold.org

The concepts of Fabrication Threshold (FR = SSV / HVB), Synthetic Signal Velocity (SSV), Human Verification Bandwidth (HVB), Persisto Ergo Didici, and the Immunity Property are published as open structural frameworks. No exclusive licenses will be granted. No commercial entity may claim proprietary ownership of these frameworks, formulas, or terminology.

The definition is public knowledge — not intellectual property.